From Weekend Prototype to Market Opportunity: Building an AI Teaching Assistant

My daughter was struggling with tests. She'd ace assignments and labs, but when quiz day came, her grades dropped.

Being an active AI user myself, I suggested she try something: drop her class materials into Gemini and ask it to generate practice quizzes. It worked - her next test grade jumped significantly.

That got me thinking: what if this wasn't just a parenting hack? What if it could work at scale in online education?

Picture this: you're taking an online course, something's not clicking, and you're about to give up. Instead of Googling random explanations that may or may not match your curriculum, you get help right there - tailored to your actual course materials. Flash cards if you're visual. Audio summaries if you learn by listening. Practice quizzes targeting exactly what you're struggling with.

And if you're too overwhelmed or shy to ask? The system detects your struggle - you've failed a quiz on the first attempt, you've rewatched the same video three times - and proactively offers help.

That's how this started.

The Context

I've been taking online courses for years. Completed many, abandoned more. It's shockingly easy to drop a course - even one you paid for - once you hit a wall.

You get stuck on a concept. Try to push through. Fall further behind. Life gets busy. Going back feels overwhelming. Eventually you convince yourself you didn't need it anyway and disappear.

The numbers I've seen suggest this isn't just my experience: dropout rates of 40-60% are commonly reported for online courses. And online education companies know this. They try to build student support systems, but support is nearly impossible to scale when you have hundreds of students, each struggling with different topics at different times.

Online education isn't going anywhere - it's too convenient. But effectiveness? That's the problem we haven't solved.

The Product

The solution is middleware that sits on top of any learning management system (LMS) and actually pays attention.

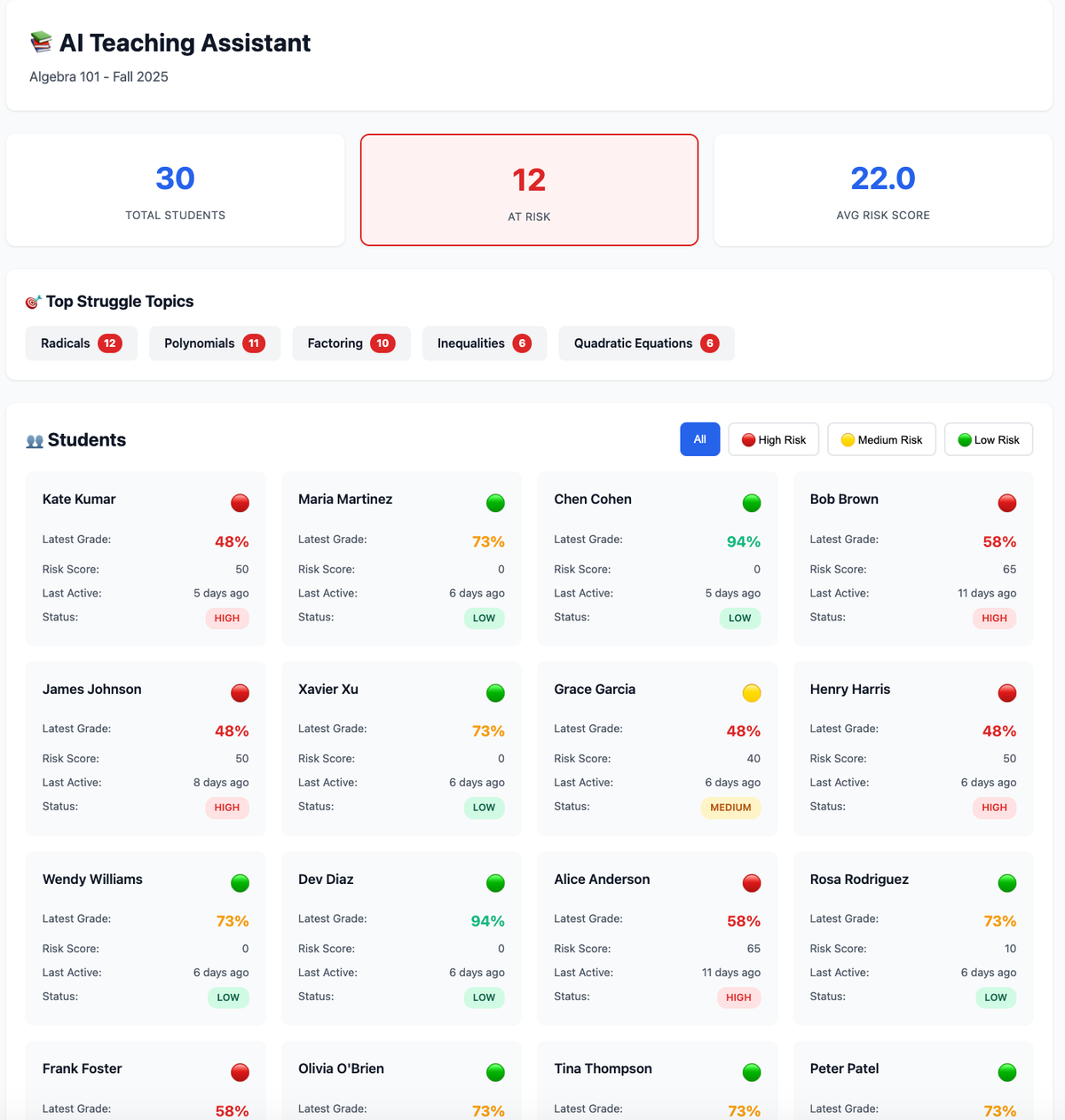

It tracks behavior patterns: Are grades declining? How many times did the student rewatch that lecture? How many quiz attempts before passing? How engaged are they with discussions?

When the system detects struggle, it does two things: notifies the instructor and offers help to the student. The instructor sees class-wide patterns - which students are struggling, which topics are causing problems, where intervention is needed. The student gets personalized study aids generated from their actual course materials, not generic web content.
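To make the detection step concrete, here is a minimal sketch of how those signals might be combined. It is not the prototype's actual code: the `StudentActivity` fields, the threshold values, and the "two or more signals" rule are all assumptions I picked for illustration.

```python
from dataclasses import dataclass, field

# Illustrative thresholds only; these are assumptions, not tuned values.
REWATCH_THRESHOLD = 3        # rewatches of the same lecture
QUIZ_ATTEMPT_THRESHOLD = 2   # attempts needed before passing
MIN_DISCUSSION_POSTS = 1     # posts in the current unit


@dataclass
class StudentActivity:
    """Hypothetical per-student snapshot of LMS data."""
    student_id: str
    recent_grades: list[float] = field(default_factory=list)  # oldest first
    lecture_rewatches: int = 0
    quiz_attempts_before_pass: int = 1
    discussion_posts: int = 0


def struggle_signals(activity: StudentActivity) -> list[str]:
    """Return the names of the struggle signals this student triggers."""
    signals = []
    grades = activity.recent_grades
    # Crude trend check: latest grade is lower than the earliest one.
    if len(grades) >= 2 and grades[-1] < grades[0]:
        signals.append("grades declining")
    if activity.lecture_rewatches >= REWATCH_THRESHOLD:
        signals.append("repeated lecture rewatches")
    if activity.quiz_attempts_before_pass >= QUIZ_ATTEMPT_THRESHOLD:
        signals.append("multiple quiz attempts")
    if activity.discussion_posts < MIN_DISCUSSION_POSTS:
        signals.append("low discussion engagement")
    return signals


def plan_intervention(activity: StudentActivity, min_signals: int = 2) -> dict[str, str]:
    """If enough signals fire, describe both actions: notify and offer help."""
    signals = struggle_signals(activity)
    if len(signals) < min_signals:
        return {}
    reasons = ", ".join(signals)
    return {
        "notify_instructor": f"{activity.student_id} may be struggling: {reasons}",
        "offer_student_help": "Want a practice quiz or flash cards from your course materials?",
    }


struggling = StudentActivity("s-001", [90.0, 80.0, 65.0], lecture_rewatches=3,
                             quiz_attempts_before_pass=1, discussion_posts=2)
on_track = StudentActivity("s-002", [85.0, 88.0], discussion_posts=4)
```

Requiring more than one signal before intervening is a deliberate choice in this sketch: a single rewatch or retry is normal studying, while several signals together look more like a student hitting a wall.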

This gives instructors actionable intelligence instead of just data, and gives students support at the moment they need it, not weeks later when it's too late.

The Gap

Current LMS platforms are good at what they were built for: storing content, tracking submissions, calculating grades. They're digital filing cabinets with gradebooks attached.

What they're missing is intelligence. They know what students submit, not what students understand. They record grades after the fact but can't predict who's about to fail. They store course materials in one-size-fits-all formats without any personalization.

The data exists - every click, every submission, every rewatch, every quiz attempt. But it sits unused in databases, generating reports that instructors don't have time to analyze and insights that come too late to matter.

The gap isn't a shortage of AI features bolted onto existing tools. It's the absence of an intelligence layer that makes educational data actually useful for learning, not just tracking.

The Vision

This isn't about building another LMS platform. It's about building the intelligence layer that works with all of them.

A middleware service that plugs into Canvas, Blackboard, Moodle - wherever the data lives - and adds intelligence without changing anyone's workflow. Instructors don't learn new tools. Students don't switch platforms. The system just makes their existing data work harder.
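One way to picture "works with all of them" is an adapter interface: each LMS gets its own adapter, and everything downstream sees the same event shape. The sketch below is hypothetical. `LMSAdapter`, `ActivityEvent`, and `InMemoryAdapter` are names I made up, and the stub stands in for real Canvas, Blackboard, or Moodle integrations rather than calling any of their actual APIs.

```python
from abc import ABC, abstractmethod
from dataclasses import dataclass


@dataclass(frozen=True)
class ActivityEvent:
    """Hypothetical normalized event, identical regardless of source LMS."""
    student_id: str
    kind: str    # e.g. "quiz_attempt" or "video_rewatch"
    detail: str


class LMSAdapter(ABC):
    """Assumed interface that each LMS integration would implement."""

    @abstractmethod
    def fetch_events(self, course_id: str) -> list[ActivityEvent]:
        """Return normalized activity events for one course."""


class InMemoryAdapter(LMSAdapter):
    """Stand-in adapter backed by a dict, used here in place of a real LMS."""

    def __init__(self, events_by_course: dict[str, list[ActivityEvent]]):
        self._events_by_course = events_by_course

    def fetch_events(self, course_id: str) -> list[ActivityEvent]:
        return list(self._events_by_course.get(course_id, []))


def events_per_student(adapter: LMSAdapter, course_id: str) -> dict[str, int]:
    """Count events per student; this code never needs to know which LMS it is."""
    counts: dict[str, int] = {}
    for event in adapter.fetch_events(course_id):
        counts[event.student_id] = counts.get(event.student_id, 0) + 1
    return counts


demo_adapter = InMemoryAdapter({
    "bio-101": [
        ActivityEvent("s-001", "video_rewatch", "lecture 4"),
        ActivityEvent("s-001", "quiz_attempt", "quiz 2"),
        ActivityEvent("s-002", "quiz_attempt", "quiz 2"),
    ]
})
demo_counts = events_per_student(demo_adapter, "bio-101")
```

The point of the design is that the analysis code depends only on the interface, so supporting another platform would mean writing one more adapter rather than touching the detection logic.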

What This Series Covers

Over the next several posts, I'm breaking down what it would actually take to turn this prototype into a real product.

Post 2: The Technical Architecture - How does middleware actually integrate with Canvas, Blackboard, Moodle? What AI components handle personalization? What gets built first versus later?

Post 3: The Market Analysis - Who else is working on this problem? What are they missing? Who are the actual customers - institutions or individual instructors?

Post 4: The Business Model - What pricing makes sense? SaaS subscription per student? White-label licensing? How do you actually get into educational institutions?

Post 5: What It Would Take to Build This - Team composition, capital requirements, regulatory considerations like FERPA, timeline from MVP to first customers.

Post 6: Why I'm Serious About This - What I bring to the table, what I'm looking for in co-founders or advisors, and how to get involved.

Each post works standalone, but together they paint the complete picture of turning a weekend prototype into a viable business.

The Question

I built this demo because I saw a problem that affected my own family. Now I'm trying to figure out if it's a problem worth solving at scale.

If you're an instructor frustrated with dropout rates, an EdTech professional who's thought about this problem, or someone who's built middleware products before - I want to hear from you. What am I missing? What would actually make this useful versus just technically interesting?

GitHub link to the AI Teaching Assistant prototype: https://github.com/was1paulina/ai-teaching-assistant-prototype

Next post: The Technical Architecture - how this middleware layer actually works under the hood.